The artificial intelligence landscape has officially entered its next era. NVIDIA GTC 2026 kicked off in San Jose this week, and its announcements have sent shockwaves through the tech industry. Standing before a crowd of tens of thousands, CEO Jensen Huang delivered a keynote that not only redefined the hardware baseline for enterprise computing but also fundamentally altered financial expectations. The centerpiece of this transformation? The groundbreaking Vera Rubin GPU architecture and an unprecedented AI infrastructure revenue forecast that projects $1 trillion in cumulative sales by the end of 2027.

As the industry pivots aggressively from foundational model training to real-world, large-scale inference, computational demands have skyrocketed. NVIDIA's answer is a vertically integrated, rack-scale supercomputer designed explicitly for what Huang dubs the "fourth scaling law" of machine learning: agentic scaling, where AI systems communicate seamlessly with other AI agents.

The Dawn of Agentic AI Hardware: Introducing Vera Rubin

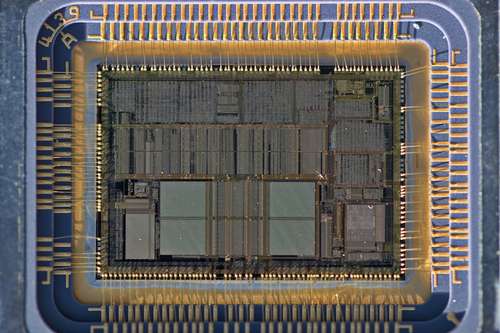

For the past two years, the Blackwell generation dominated data center build-outs. However, Huang's keynote made it clear that standalone chips are no longer sufficient for the future of computing. The newly unveiled Vera Rubin platform represents a paradigm shift toward cohesive, pod-scale factory designs optimized specifically for agentic AI hardware.

At the heart of this new ecosystem is the Vera Rubin NVL72 rack. This engineering marvel unifies 72 Rubin GPUs and 36 Vera CPUs, bound together by the next-generation NVLink 6 high-speed interconnect. This networking breakthrough reduces data transfer latency between processors to the microsecond level. But the true star of the show is the leap in HBM4 memory performance.

Each Rubin GPU features up to 288 GB of cutting-edge HBM4 memory, delivering a staggering 22 TB/s of aggregate memory bandwidth. Industry leaders like Micron have already begun volume shipments of HBM4, achieving pin speeds above 11 Gbps and power efficiency improvements of more than 20%. This hardware synergy enables the Rubin GPUs to achieve a blistering 10x increase in real-time AI inference throughput per watt compared to their Blackwell predecessors. This massive leap means enterprises can generate up to 15 times more tokens and support models ten times larger, facilitating rich, multi-agent interactions without human bottlenecks.
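To put the memory figures in rack-level terms, the short Python sketch below multiplies them out across the 72 GPUs of an NVL72 rack. It assumes the 288 GB and 22 TB/s numbers are per-GPU values and that peak bandwidth is sustained, which real workloads rarely manage; the function names are illustrative and not part of any NVIDIA tooling.

```python
# Figures quoted in the article. Treating the HBM4 numbers as per-GPU
# values is an assumption made for this illustration.
GPUS_PER_RACK = 72
CPUS_PER_RACK = 36
HBM4_GB_PER_GPU = 288
HBM4_TBPS_PER_GPU = 22


def rack_hbm4_totals(gpus=GPUS_PER_RACK,
                     gb_per_gpu=HBM4_GB_PER_GPU,
                     tbps_per_gpu=HBM4_TBPS_PER_GPU):
    """Return (capacity in decimal TB, bandwidth in TB/s) summed over one rack."""
    capacity_tb = gpus * gb_per_gpu / 1000
    bandwidth_tbps = gpus * tbps_per_gpu
    return capacity_tb, bandwidth_tbps


def full_memory_read_ms(gb_per_gpu=HBM4_GB_PER_GPU,
                        tbps_per_gpu=HBM4_TBPS_PER_GPU):
    """Milliseconds for one GPU to read its whole HBM4 once at peak bandwidth."""
    seconds = gb_per_gpu / (tbps_per_gpu * 1000)  # GB divided by GB/s
    return seconds * 1000


if __name__ == "__main__":
    capacity_tb, bandwidth_tbps = rack_hbm4_totals()
    print(f"GPUs per CPU: {GPUS_PER_RACK // CPUS_PER_RACK}")
    print(f"Rack HBM4 capacity: {capacity_tb:.1f} TB")
    print(f"Rack HBM4 bandwidth: {bandwidth_tbps} TB/s")
    print(f"Full-memory read per GPU: {full_memory_read_ms():.1f} ms")
```

Under these assumptions a rack holds roughly 20.7 TB of HBM4 with about 1,584 TB/s of combined bandwidth, and a single GPU could sweep its entire memory in about 13 ms. That sweep time is a rough lower bound on per-token latency for a memory-bound model that fills the GPU, which is why memory bandwidth matters so much for inference.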

Diving Into the NVIDIA Vera CPU Specs

While graphics processing units traditionally steal the spotlight at GTC, the Vera CPU's specifications have captured the attention of data center architects worldwide. Rather than targeting standard general-purpose computing, the Vera CPU is billed as the world's first processor built from the ground up to handle the multitasking parallelism required by autonomous agents.

Under the hood, the Vera processor boasts 88 custom "Olympus" cores. Utilizing NVIDIA Spatial Multithreading, each core can execute two tasks simultaneously, ensuring consistent, ultra-low-latency performance in multi-tenant environments. Paired with advanced LPDDR5X memory, the CPU delivers a staggering 1.2 TB/s of bandwidth. According to NVIDIA, this design provides double the memory bandwidth at half the power consumption of traditional data center CPUs.

For businesses deploying reinforcement learning and complex agentic workflows, the Vera CPU cluster translates to double the computing efficiency. In a CPU-focused configuration, separate from the 36 Vera CPUs of the NVL72, a single rack can integrate 256 of these processors, supporting tens of thousands of autonomous agents running concurrently.
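The "tens of thousands of agents" figure can be sanity-checked against the core counts quoted above. The sketch below is a back-of-the-envelope calculation only: it reads the two simultaneous tasks per core as two hardware threads, and the one-agent-per-thread comparison is a simplifying assumption of this example, not an NVIDIA sizing rule.

```python
CORES_PER_CPU = 88     # "Olympus" cores per Vera CPU, from the article
THREADS_PER_CORE = 2   # two simultaneous tasks per core, read here as hardware threads
CPUS_PER_RACK = 256    # processors per rack, from the article


def rack_cpu_resources(cpus=CPUS_PER_RACK,
                       cores_per_cpu=CORES_PER_CPU,
                       threads_per_core=THREADS_PER_CORE):
    """Return (total cores, total hardware threads) for one CPU rack."""
    total_cores = cpus * cores_per_cpu
    total_threads = total_cores * threads_per_core
    return total_cores, total_threads


def agents_per_thread(num_agents, total_threads):
    """Average number of agents sharing each hardware thread."""
    return num_agents / total_threads


if __name__ == "__main__":
    cores, threads = rack_cpu_resources()
    print(f"Cores per rack: {cores}")
    print(f"Hardware threads per rack: {threads}")
    # Hypothetical load of 40,000 concurrent agents
    print(f"Agents per thread at 40,000 agents: {agents_per_thread(40_000, threads):.2f}")
```

With these inputs a rack exposes 22,528 cores and 45,056 hardware threads, so a load in the tens of thousands of agents works out to roughly one agent per thread or fewer. Real agent workloads spend much of their time waiting on GPU inference or I/O, so actual capacity would depend heavily on the workload.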

A Staggering $1 Trillion AI Infrastructure Revenue Forecast

Beyond the silicon, the financial implications revealed at the conference were nothing short of historic. Huang issued an updated AI infrastructure revenue forecast, predicting that NVIDIA will generate over $1 trillion in cumulative AI revenue by the end of 2027. This figure is double the $500 billion target the company outlined just one year ago.

This explosive growth is driven by a staggering backlog of enterprise purchase orders and a fundamental market transition. Companies are no longer just experimenting with isolated machine learning models; they are actively deploying physical AI, integrating digital twins via platforms like the Omniverse DSX Blueprint, and scaling sovereign AI factories. Leading neo-cloud providers like CoreWeave and Nebius have already announced deep integrations with the new hardware, positioning themselves to deploy the purpose-built servers by the second half of this year.

Wall Street reacted swiftly to the announcement, with analysts noting that the unprecedented performance-per-watt of the architecture makes it the undisputed hardware of choice for scaling workloads, solidifying NVIDIA's market dominance.

Expanding the Ecosystem: Groq LPUs and Orbital Data Centers

NVIDIA's vision for 2026 extends far beyond traditional data centers. Following a strategic $20 billion asset acquisition last December, GTC 2026 marked the official integration of Groq's Language Processing Unit (LPU) technology into the NVIDIA stack. The new Groq 3 LPU is purpose-built to accelerate specific natural language inference tasks, offering developers unprecedented flexibility when combined with the Vera and Rubin architectures.

To operationalize these massive deployments, NVIDIA also rolled out the Vera Rubin DSX AI Factory reference design. This comprehensive suite includes modular applications like DSX Flex, which connects AI data centers directly to power-grid services to manage intense energy demands, and DSX Sim for validating sovereign setups through high-fidelity digital twins.

Perhaps the most futuristic announcement of the week was the introduction of the Vera Rubin Space Module. Designed for orbital data centers, this specialized module delivers up to 25 times more compute power than the H100 GPU. It enables commercial space companies to run advanced foundation models directly in orbit, processing massive streams of satellite data in real time without relying on high-latency downlinks to Earth.

As GTC 2026 continues, one thing is abundantly clear: the era of isolated processing is over. With the Vera Rubin platform, NVIDIA isn't just selling chips; it is architecting the foundational infrastructure for the next decade of intelligent, autonomous systems.